3.6 saw~onepole~noise~click~

3.6.1 saw~onepole~noise~click~b2.maxpat

Another piece that I composed in 2010, which combines a predetermined rhythmical structure with randomised pitch content, has the title saw~onepole~noise~click~ (which is easier to say if one omits the tilde from each word). As with sub synth amp map (§3.3) the title here describes what the piece is based upon; in this case by taking the names of the MaxMSP objects that are at the heart of the soundmaking in the system. Another similarity of this work to that (§3.3) is the context of a live coding frame of reference during the genesis of the piece, though the patch has then undergone a number of revisions beyond its inception. Whereas a live coding performance version of this piece (if one were to write a text score describing such a thing) would have the structural form of elements being added incrementally, the piece as it stands has its own (open outcome) form derived by its fixed state. The final version of the piece is saved as saw~onepole~noise~click~b2.maxpat.

This piece was actually started shortly before the construction of the model of the gramophone, but, for the purposes of narrative within this document, the present work is described in the context of the latter. In both g005g and saw~onepole~noise~click~ there is found a primary soundmaking process that is augmented by a secondary DSP subsystem. The primary soundmaking process in g005g is the conceptual model of a gramophone, and the secondary DSP is the 'corrective' bi-polarising stage (see §3.5.4.c); in saw~onepole~noise~click~ the primary soundmaking process is inspired by the physical modelling of the well-known Karplus-Strong (K-S) algorithm (Karplus and Strong, 1983), and the secondary processing, rather than being a constant on the output, is one that takes short recordings of audio data at specific points for later playback within the piece. This is a composition that is similar to g005g, both in that it exists as a maxpat containing a green button on which to click to begin the performance, and in that it will play differently-but-the-same each time. Each rendition of saw~onepole~noise~click~ will yield a different set of values that manifest during the piece; soundmaking parameter values are not listed, stored, and stepped through as they are in g005g, but are instead chosen at the moment when they are to be acted upon.

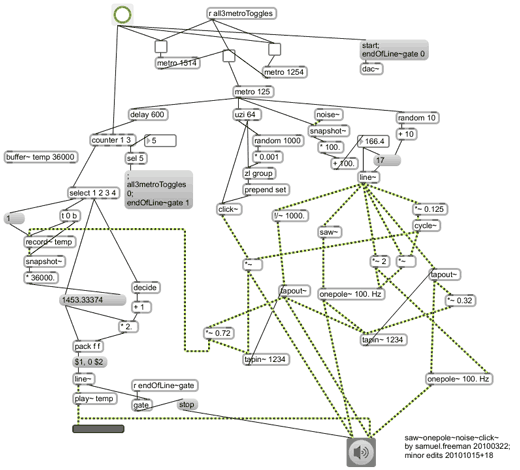

Whereas g005g incorporates the concept of scored elements – the collections of value-duration pairs that are cycled through – within itself, the saw~onepole~noise~click~ maxpat is thought of as being the entirety of the 'score' for the piece that is (to some extent) human readable (though not humanly playable in any practical sense). It is a relatively small patch in which all the objects and connections can be seen within a single view (see Figure 3.37 below). A suitably experienced reader may construct a mental projection of how the piece will sound just by 'reading' the visual representation of the work that is the patch, in much the same way that a classically trained instrumentalist may imagine the sounding of notes from the silent reading of a CMN score.

The visual manifestation of the maxpat, as in Figure 3.37, represents the sound-work which is manifest as audio signals (stereo) in a DAC of the computer that is running the software to perform the piece. The compositional motivations behind the various parts of the system will be described, here, leading to the concept and context of software as substance, explored in §3.7 which concludes this chapter.

3.6.2 The domain dichotomy

One of the aesthetic motivations explored by this piece concerns the dichotomy of time-domain and frequency-domain thinking when making and processing sound within software. Lines of thought on this are found at different levels of abstraction: the traditional conception of music as comprising the primary elements of rhythm and pitch; the possibility of contemplating, and playing upon, the difference between units of duration and units of frequency (simultaneously using a value, x, both as x milliseconds and as x Hertz); and the combination, in the sound synthesis of this piece, of both impulse-based and sustained-tone-based signals. Further variations of the idea follow in the description below, and the domain dichotomy theme remains as an undercurrent to all subsequent works described by this thesis.

For each of the four MaxMSP objects that are named in the title of this piece (saw~, onepole~, noise~, and click~) Figure 3.38 demonstrates some time- and frequency-domain conceptual abstractions:


Figure 3.38: saw~onepole~noise~click~(domainConcept)

3.6.3 Temporal structure

[n3.44] On the one hand, the time interval values given to these three metro objects are arbitrary; on the other hand, the temporal resolutions were specifically selected for the type of rhythmical interaction that I knew they would create.

There are three metro objects that cause things to happen within the piece; they may be referred to as A (metro 1254), B (metro 1514), and C (metro 125).[n3.44] The bang messages that are output both by A and by B are used to toggle the on/off state of C. The output of C has four destinations; three comprise the triggering of a sounding event (see §3.6.4), and the fourth is used to determine whether or not more than 600 milliseconds have passed since the previous output of C. If the delay between C bangs is greater than that, then a counter (bound to cycle from one to two to three to one and so on) will be triggered to increment.

When the output of the counter is one, audio data (taken from a point within the main soundmaking section) begins to be recorded to buffer~ temp; when the counter is two that recording is stopped and the duration of that recording is stored; on reaching three the counter will first output a 'carry count', which is the number of times that the counter has reached its maximum since being reset when the piece was started: if the carry count is five then the 'end of line' gate is opened, and in any case the replaying of the recorded audio data is triggered. Playback of the recorded audio data will always be 'backwards' (this is what the duration value stored at counter-two is used for), but may either be at the same rate of play at which it was recorded or at half that speed. If the 'end of line' gate is open at the end of the audio data playback then the piece ends simply by stopping the DSP (which implies the assumption that MaxMSP is not being used for anything other than the performance of this piece).
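The three-state cycle described above can be sketched outside of MaxMSP. The following Python is a minimal illustration only, not a transcription of the patch: the class name `RecordingCycle` and its attributes are hypothetical, and the random choice between the two playback rates is an assumption, since the text says only that playback may be at either rate.

```python
import random


class RecordingCycle:
    """Hypothetical model of the counter that cycles one, two, three."""

    def __init__(self, end_after_carries=5, seed=None):
        self.state = 0              # counter output; 0 means 'not yet ticked'
        self.carries = 0            # times the counter has reached three
        self.end_gate_open = False  # the 'end of line' gate
        self.end_after_carries = end_after_carries
        self.rng = random.Random(seed)

    def tick(self):
        """Advance the counter once and return the action that it triggers."""
        self.state = self.state % 3 + 1
        if self.state == 1:
            return "start recording"
        if self.state == 2:
            return "stop recording and store duration"
        # State three: report the carry count, then trigger playback.
        self.carries += 1
        if self.carries == self.end_after_carries:
            self.end_gate_open = True
        # Assumption: the rate is picked at random from the two options.
        rate = self.rng.choice([1.0, 0.5])
        return f"play backwards at rate {rate}"
```

Under these assumptions the gate opens on the fifteenth tick (five carries of a three-state counter), after which the piece would end once the final playback has finished.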

In the 'saw~onepole~noise~click~' folder of the portfolio directories there is an 'eventTrace' text file that was saved from the Max window after running saw~onepole~noise~click~b2(hacked).maxpat which has had print objects added at various points in the patch, including to give a trace of the recorded durations from the buffer DSP section described above. The first 20 lines of that text file are as follows:

    START: bang
    A: bang
    B: bang
    A: bang
    C: bang
    C: bang
    C: bang
    B: bang
    counter: 1
    A: bang
    C: bang
    C: bang
    C: bang
    C: bang
    C: bang
    B: bang
    counter: 2
    recDur: 1502.667099
    A: bang
    C: bang

The starting bang switches both A and B on, which means that C is switched on and then off again within the same 'moment'. C is switched on again by A, 1.254 seconds later, and is able to bang three times before B switches it off. The pause at this point is long enough to trigger the counter, and recording begins. After the next bang from A, C triggers five times before B fires and again the counter ticks; line 18 of the 'eventTrace' text tells us that just over 1.5 seconds of audio data – capturing the output of the last five sounding events – has been recorded. Onward goes the procession, and the timing is always the same. The distribution and values of the 'recDur' lines are of interest in terms of the compositional decision to end the piece after 15 ticks of the counter, whereas the first version of the piece stops after 30.
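The interaction of the three metro objects can be approximated with a small simulation. This is a sketch under stated assumptions rather than a model of the Max scheduler: time is an idealised integer-millisecond clock; both A and B are taken to bang at time zero; C is taken to bang immediately when switched on, unless it is switched off again in the same moment; and the 600 millisecond pause is detected by a timeout that is re-armed by each bang from C. The function name `simulate` and its parameters are hypothetical.

```python
def simulate(duration_ms, a_ms=1254, b_ms=1514, c_ms=125, gap_ms=600):
    """Return (time_ms, label) events on an idealised millisecond clock."""
    events = []
    c_on = False    # on/off state of metro C
    next_c = None   # time of the next bang from C, if it is running
    timeout = None  # time at which the pause detector would fire
    count = 0       # counter output, cycling 1, 2, 3
    for t in range(duration_ms + 1):
        toggled = False
        for label, period in (("A", a_ms), ("B", b_ms)):
            if t % period == 0:
                events.append((t, label))
                c_on = not c_on
                toggled = True
        if toggled:
            # Assumption: C (re)starts only if it ends this moment switched on.
            next_c = t if c_on else None
        if c_on and next_c == t:
            events.append((t, "C"))
            next_c = t + c_ms
            timeout = t + gap_ms  # each bang from C re-arms the detector
        if timeout == t:
            count = count % 3 + 1
            events.append((t, f"counter: {count}"))
            timeout = None
    return events
```

Run for 3608 milliseconds, this sketch gives the same order of A, B, C, and counter events as the trace quoted above (which additionally has its 'START' and 'recDur' lines). The two counter ticks fall 1504 milliseconds apart on this idealised clock, which is close to, but not the same as, the 1502.667099 reported by the patch; the difference presumably lies in scheduling details that are not modelled here.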

3.6.4 Sounding events

(3.6.4.a) Triggering sound

The first three destinations of each bang message from metronome C trigger what may, in traditional terms, together be called a new note within the piece; the three parts of these sounding events are: (1) an onset ramp duration in range 10 to 19 milliseconds, selected via random and held for use with line~; (2) a value in range 0 to 200, selected via noise~ and ramped to by line~ over the held duration: there are six destinations for the signal output from line~ which is interpreted both as a frequency and as a duration (see below); finally (3) an impulse of 64 samples in range zero to one, selected via random, is used with click~ to give each sounding event a percussive attack that is extended through a short and pitched decay by a K-S inspired model. The synthesis can be thought of as having two 'inputs': the impulse and the continuous control signal.
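For readers unfamiliar with the K-S idea, the following is a minimal textbook-style sketch of a plucked-string loop: a delay line is filled with an excitation and then recirculated through a low-pass filter. It is not the algorithm of the patch, which is only inspired by K-S and is excited by an impulse from click~ rather than by the noise burst used here. The function name, the `damping` and `feedback` parameters, and their default values are all assumptions made for illustration.

```python
import random


def karplus_strong(frequency_hz, duration_s, sample_rate=44100,
                   damping=0.5, feedback=0.99, seed=None):
    """Textbook-style plucked-string sketch; returns a list of samples."""
    rng = random.Random(seed)
    # The delay length in samples sets the pitch of the decay.
    period = max(2, int(round(sample_rate / frequency_hz)))
    # Excitation: one period of noise (the patch uses an impulse instead).
    delay_line = [rng.uniform(-1.0, 1.0) for _ in range(period)]
    out = []
    previous = 0.0  # state of the one-pole low-pass filter
    for n in range(int(duration_s * sample_rate)):
        index = n % period
        current = delay_line[index]  # the sample written one period ago
        # One-pole low-pass: y[n] = y[n-1] + damping * (x[n] - y[n-1])
        previous = previous + damping * (current - previous)
        # Write the filtered, attenuated sample back into the loop.
        delay_line[index] = feedback * previous
        out.append(current)
    return out
```

Calling `karplus_strong(200.0, 1.0)` gives one second of a decaying tone near 200 Hz, the upper end of the control range described above; lower control values imply longer delay lines and lower pitches.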

(3.6.4.b) Synthesis flow

Above were introduced the roles of the noise~ and click~ objects; the roles of saw~ and onepole~ are illustrated, below, in a flow-chart representation of the main soundmaking part of the saw~onepole~noise~click~ system (Figure 3.39): saw~ is the 'saw wave oscillator', and onepole~ is the low-pass (LP) filter, of which there are two. There are also two delay lines within the system; in Figure 3.39 they are described one as being of the frequency-domain, and the other of the time-domain. The former uses the control signal as a frequency value and takes the period of that frequency as the delay time; the latter uses the control signal directly to set the delay time.
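The two readings of the control signal can be made concrete with a little arithmetic. In the sketch below the function names are hypothetical, and raising an error for a control value of zero is an assumption; how the patch itself handles that case is not stated.

```python
def frequency_domain_delay_ms(control):
    """Read the control value as Hertz; delay by one period of it."""
    if control <= 0:
        raise ValueError("no period exists for a frequency of zero or below")
    return 1000.0 / control


def time_domain_delay_ms(control):
    """Read the control value directly as a number of milliseconds."""
    return float(control)
```

A control value of 100 therefore gives a delay of 10 milliseconds in the frequency-domain reading and of 100 milliseconds in the time-domain reading. The two readings coincide only where the value equals the square root of 1000 (approximately 31.6), and otherwise they move in opposite directions as the control value changes.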

Due to the interactivity of the elements, the flow-chart representation of the synthesis here appears almost as complicated as the maxpat itself (Figure 3.37, above), but the flow-chart does make more explicit some of the thinking behind the work by showing the algorithm at a higher level of abstraction.

3.6.5 Scored recordings

It has already been stated that the maxpat constitutes a complete 'score' for this piece of music; as a visual description of the work it conveys precise information for the processes of soundmaking and temporal organisation. Pitch, on the other hand, is only specified as a fundamental frequency bandwidth (in range 0 to 200) that is quantized only to the resolution of the DSP bit-depth.

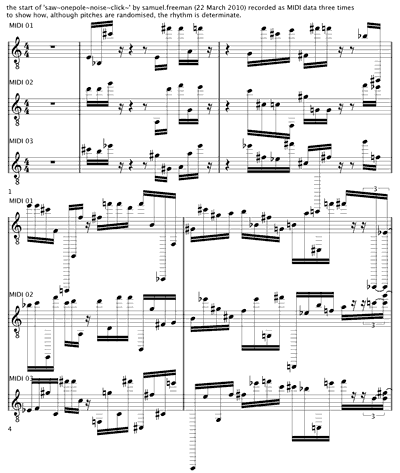

A copy of the maxpat was modified to give an output of the sounding events approximated as MIDI notes. Three renditions of the piece were then recorded as MIDI data into Cubase (Steinberg), from which a CMN score was prepared[n3.45] in order to show how those three recordings have identical rhythmic content. An excerpt, from the start of the piece, is shown in Figure 3.40.

[n3.45] Printed to pdf file, see saw~onepole~noise~click~b.pdf within the portfolio directory.

Perhaps it was polemics that motivated the production of a CMN score from this piece; not only was I curious to see how the DAW software would render the information given to it, but also to see how my feelings toward the musical assumptions that underpin the work would be affected. The CMN produced includes only representation of the rhythmical content and the approximate pitches that were played in the three renditions that were recorded as MIDI data. For demonstration of the rhythmical sameness between recordings the CMN serves well; maybe even better than would waveform or spectrogram representations, but none of these were used during the composition of the work.

As a piece of computer music, saw~onepole~noise~click~ stands as an exploration of a generative rhythmic engine playing a 'software instrument' which has conceptual depths that exceed those of the programming which expresses them. Within the technical exploration of controlled soundmaking is the aesthetic engagement with the domain dichotomy (§3.6.2), and beyond that the additional dichotomy of score and instrument is brought to attention.
